Original studies

Published May 15, 2026 | http://doi.org/10.5867/medwave.2026.04.3219


Quality of reporting of systematic reviews with meta-analysis of diagnostic test accuracy of rapid antigen tests for SARS-CoV-2 according to PRISMA-DTA: A meta-epidemiological survey

Abstract

Introduction Systematic reviews are crucial for informing health decisions and supporting evidence-based policymaking. Reporting guidelines aim to reduce ambiguity and confusion while promoting clarity, completeness, and transparency in reporting. Our study aimed to assess the completeness of reporting of diagnostic test accuracy systematic reviews with meta-analysis on rapid antigen tests for SARS-CoV-2 deployed during the COVID-19 pandemic using the PRISMA-DTA guideline.

Methods We conducted a meta-epidemiological survey of systematic reviews with meta-analysis of rapid antigen tests for SARS-CoV-2. We searched MEDLINE/PubMed, EMBASE, L·OVE Covid-19, and Web of Science Clarivate from inception to July 10, 2023, and updated the MEDLINE/PubMed search to April 3, 2025, with no language restrictions. We included reviews that used explicit systematic review methods and reported summary estimates of test sensitivity and specificity. We assessed compliance with the 27 PRISMA-DTA items.

Results After screening 5252 publications, we included 38 reviews. No PRISMA-DTA item was infrequently reported (i.e., in fewer than 33% of reviews). Regarding completeness per review, 23 (60.5%) of the included reviews reported more than 66% of the items, and 15 (39.5%) reported between 33% and 66%; none reported fewer than 33%. None of the included reviews complied with the full PRISMA-DTA checklist.

Conclusions Our meta-epidemiological survey reveals persistent shortcomings in the reporting quality of systematic reviews evaluating the accuracy of rapid antigen tests for SARS-CoV-2. While some items were consistently addressed, numerous critical domains requiring a deeper understanding of diagnostic test accuracy methods showed suboptimal reporting adherence.

Introduction

Systematic reviews search for and synthesize the best available evidence addressing a clinical question related to therapy, screening, diagnosis, or harm, following a pre-specified plan previously published or registered as a protocol [1]. Systematic reviews are crucial for informing health decisions and supporting evidence-based policymaking. However, systematic reviews are only as good as the primary studies they include. Factors that can render primary studies unusable include research questions that are not aligned with the burden of disease, poor design and conduct, and unclear, inaccurate, or incomplete reporting of results [2]. Prominent scholars have suggested that 85% of health research is wasted [3] and that poor reporting is unethical and negatively affects patient care [4]. Efforts to reduce waste from incomplete or unusable reports of biomedical research led to the development of a wide variety of reporting guidelines [5]. Reporting guidelines aim to reduce ambiguity and confusion while promoting clarity, completeness, and transparency in reporting [6], and guidelines for most study designs are available from the EQUATOR Network [7].

Systematic reviews are published at an increasingly high rate. One observational study estimated that 29,000 systematic reviews were published in 2019 alone, an average of 80 per day [8]. Between 2020 and 2022, 159,657 systematic reviews were published, peaking at 128 per day in 2022 [9]. This proliferation, together with the growing demand for evidence syntheses on topics requiring rapid decision-making, as in a pandemic, has driven a newer form of evidence synthesis: overviews of systematic reviews [10]. Overviews use systematic reviews as the unit of analysis, extracting and analysing their results to describe the current body of evidence on a topic of interest or to address a new review question [11]. Just as systematic reviews depend on well-designed, well-conducted, and well-reported primary studies, overviews require well-designed, well-conducted, and well-reported systematic reviews.

In 2009, the Preferred Reporting Items for Systematic reviews and Meta-Analyses (PRISMA) statement was developed to address poor reporting in systematic reviews of intervention studies; it was subsequently updated, most recently in 2020. The 2020 version comprises seven sections with 27 items, some of which include sub-items [12]. Systematic reviews that synthesize evidence on diagnostic tests are also becoming increasingly important, enabling clinicians to make evidence-based decisions in diagnostic workups and informing health policies, clinical guidelines, and pathways. The need for transparent and accurate reporting of systematic reviews of diagnostic test accuracy led to the development of a PRISMA extension, PRISMA-DTA, which accounts for the methodological specificities of this type of review [13,14].

The COVID-19 pandemic led to widespread testing for SARS-CoV-2. Rising case numbers and the need for mitigation strategies prompted numerous public health measures and a surge in systematic reviews on screening, diagnostics, and interventions. The methodological quality of these pandemic publications was predominantly low or critically low [15]. A study using the ROBIS tool to assess systematic reviews published during the pandemic, regardless of topic, found that both COVID-19-related and non-COVID-19-related reviews were of lower quality than those published before the pandemic [16]. This surge also included dozens of systematic reviews assessing the diagnostic accuracy of rapid antigen tests (RATs) for SARS-CoV-2 against the reference standard, RT-PCR.

Since policy decisions during the pandemic were made based on what should be high-level evidence, systematic reviews that assessed the diagnostic accuracy of RATs should have been reported completely, transparently, and accurately. However, a study on the completeness of reporting of diagnostic test accuracy (DTA) systematic reviews published the year before the pandemic showed that they were not fully informative when evaluated against the PRISMA-DTA guidelines [17]. Our study aimed to assess the completeness of reporting of DTA systematic reviews with meta-analysis on rapid antigen tests for SARS-CoV-2 deployed during the COVID-19 pandemic using the PRISMA-DTA guideline.

Methods

We conducted a meta-epidemiological survey of systematic reviews with meta-analysis of RATs for SARS-CoV-2. This project was based on an overview protocol registered in PROSPERO (CRD42023408442) that addressed four predefined study questions. The first three questions were addressed by assessing the methodological rigor and risk of bias of the reviews and the potential factors associated with methodological variability between reviews. For those questions, reviews were retrieved by searching MEDLINE/PubMed, EMBASE, L·OVE Covid-19, and Web of Science Clarivate from inception to July 10, 2023, with no language restrictions; the results have been published [18].

The current study addresses the fourth question of the registered protocol: assessing the reporting quality of these systematic reviews against the PRISMA-DTA reporting guideline. We complemented the original search with an update restricted to MEDLINE/PubMed, covering July 10, 2023, to April 3, 2025, and using the same search strategy. We restricted the update to PubMed because it had performed robustly in the initial search and because the literature supports this approach [19]. The full search strategies are available as supplementary material in a public repository [20].

To be included in the overview, reviews had to fulfil the following criteria: (1) describe the methods and criteria used to search for and identify primary studies; (2) use explicit methods to extract and analyse the findings of the included primary studies; and (3) report a summary estimate of sensitivity and specificity.
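For reference, the summary sensitivity and specificity required by criterion (3) pool the standard per-study definitions below, where TP, FP, FN, and TN are the true-positive, false-positive, false-negative, and true-negative counts of the index test (here, a RAT) against the reference standard (RT-PCR). This is textbook background rather than part of the protocol; the pooling model each review used (for example, a bivariate random-effects model) is not specified here.

```latex
\text{Sensitivity} = \frac{TP}{TP + FN}, \qquad
\text{Specificity} = \frac{TN}{TN + FP}
```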

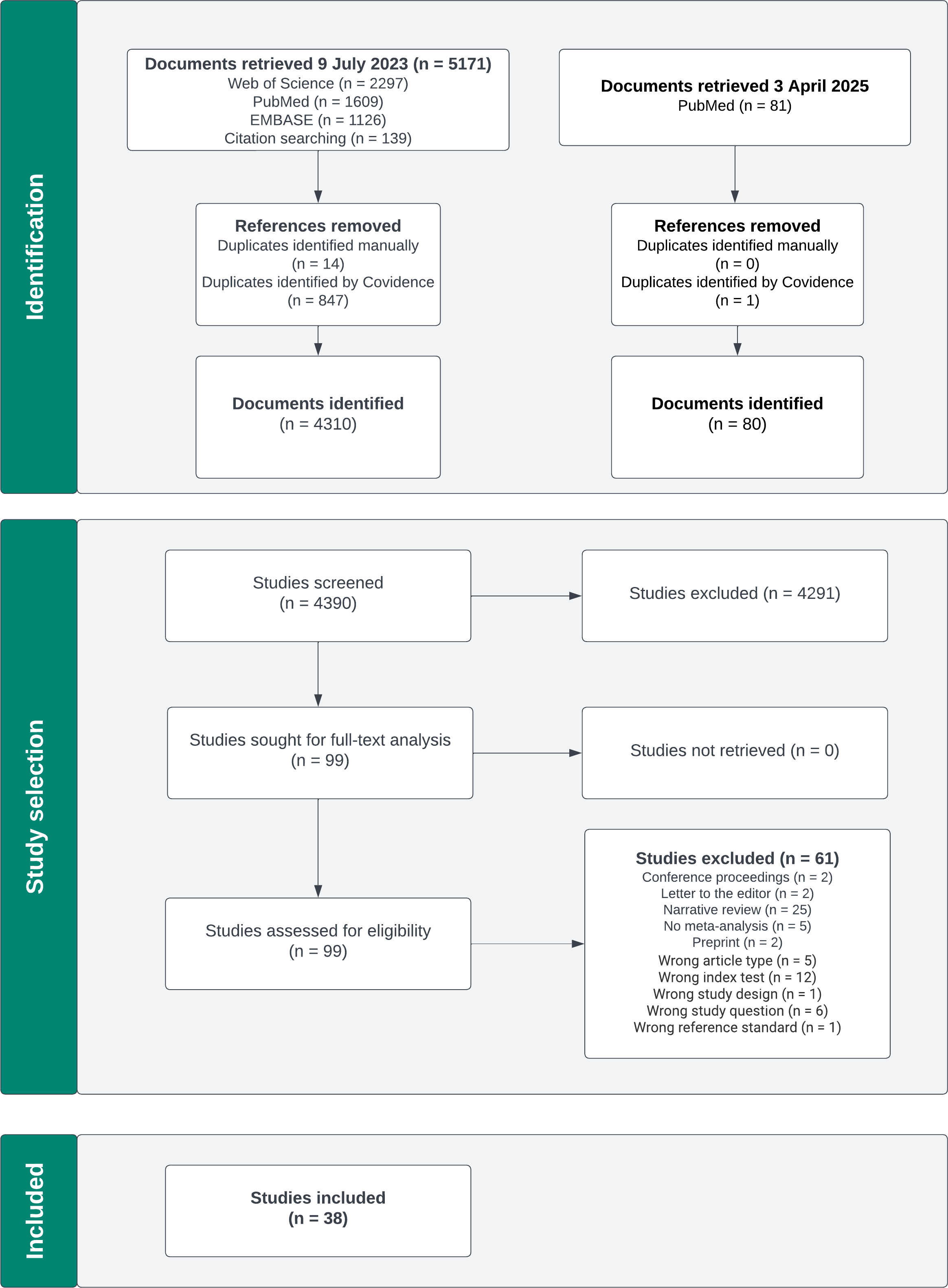

All search results were imported into Covidence [21] for deduplication and screening. Two reviewers independently and in parallel screened titles and abstracts against predefined inclusion and exclusion criteria, and a third reviewer resolved any discrepancies. Full texts were then assessed for eligibility, and articles that did not meet the criteria were excluded; reasons for exclusion are reported in the PRISMA flowchart (Figure 1).

We extracted the following data on study characteristics: year of publication, country of the first author, region, journal, journal’s impact factor, presence of supplementary material, PRISMA adoption, PRISMA citation, setting, population characteristics, sample size, summary sensitivity and summary specificity.

We assessed compliance with the 27 PRISMA-DTA items. When an item contained more than one concept, we split it into subitems after discussion within the research team, which yielded 79 subitems in total. The full extraction table, including the signalling questions, is available as supplementary material.

We scored each item 1 (compliant) or 0 (non-compliant). Following Salameh et al. [17], any item judged "not applicable" was scored 1. For items operationalized into subitems, an item was scored as compliant only if all of its subitems were reported; if any subitem was missing, the whole item was scored 0.
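The all-or-nothing scoring rule can be sketched in Python. This is a minimal illustration under stated assumptions, not the authors' extraction tool: the dictionary encoding (True = reported, False = not reported, None = not applicable) and the subitem names are hypothetical, and treating a not-applicable subitem as satisfied is our assumption, extending the item-level rule that "not applicable" scores 1.

```python
from typing import Mapping, Optional

# Hypothetical encoding of one review's judgements for a single PRISMA-DTA item:
# True = subitem reported, False = not reported, None = judged "not applicable".
SubitemJudgements = Mapping[str, Optional[bool]]


def score_item(subitems: SubitemJudgements) -> int:
    """Return 1 (compliant) or 0 (non-compliant) for one item.

    An item is compliant only if every subitem is reported; subitems judged
    "not applicable" are treated as satisfied (assumption), so an item whose
    subitems are all "not applicable" scores 1.
    """
    return int(all(judgement is None or judgement for judgement in subitems.values()))


# Hypothetical example: funding (Item 27) split into two subitems.
funding = {"sources_disclosed": True, "sponsor_role_described": False}
print(score_item(funding))  # prints 0: one missing subitem fails the whole item
```

The example mirrors the pattern described for Item 27, where funding sources were usually disclosed but the sponsor's role often was not.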

To summarize compliance across the included reviews, we classified each item as "infrequently reported" when it was reported in fewer than 33% of the reviews, "moderately reported" when it was reported in 33% to 66%, and "frequently reported" when it was reported in more than 66% [17].
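The three reporting-frequency bands can likewise be expressed as a small Python function. This is a sketch assuming the cut-offs are applied exactly as worded (below 33%, 33% to 66% inclusive, above 66%); the function name is hypothetical.

```python
def reporting_category(n_reported: int, n_reviews: int) -> str:
    """Classify a PRISMA-DTA item by the share of reviews that reported it."""
    if n_reviews <= 0:
        raise ValueError("n_reviews must be positive")
    share = 100 * n_reported / n_reviews  # percentage of reviews reporting the item
    if share < 33:
        return "infrequently reported"
    if share <= 66:
        return "moderately reported"
    return "frequently reported"


# With 38 reviews, the band boundaries fall between these counts:
for count in (12, 13, 25, 26):
    print(count, reporting_category(count, 38))
# 12 infrequently reported
# 13 moderately reported
# 25 moderately reported
# 26 frequently reported
```

With 38 reviews, no integer count yields exactly 33% or 66%, so how ties at the boundaries are handled does not change any classification in this sample.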

To ensure the quality of data extraction, the senior investigators trained the data extractors in weekly sessions on the PRISMA-DTA checklist, as outlined in the explanatory paper [20]. We piloted our data extraction sheet (Google Sheets, Google LLC) on one of the included reviews. Five reviewers (JH, BM, PIOG, CES, SVV), working in pairs, independently and in parallel extracted the data from each systematic review. Discrepancies were resolved by consensus between the paired reviewers and subsequently through discussion within the study team (VCB, FJL, NM, and MV).
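Because each review was extracted independently by two reviewers, the discrepancies to be resolved by consensus are the subitems on which the two extraction records differ. A minimal Python sketch, assuming each record is a hypothetical mapping from subitem ID to judgement (the IDs below are invented and do not follow the study's extraction sheet):

```python
from typing import Dict, List, Optional

# Hypothetical extraction record: subitem ID -> judgement
# (True = reported, False = not reported, None = not applicable).
Extraction = Dict[str, Optional[bool]]


def find_discrepancies(reviewer_a: Extraction, reviewer_b: Extraction) -> List[str]:
    """List subitem IDs on which two independent extractors disagree.

    A subitem recorded by only one reviewer also counts as a discrepancy,
    so that nothing silently drops out of the consensus step.
    """
    all_ids = set(reviewer_a) | set(reviewer_b)
    missing = object()  # sentinel distinct from True, False, and None
    return sorted(
        sid for sid in all_ids
        if reviewer_a.get(sid, missing) != reviewer_b.get(sid, missing)
    )


# Hypothetical paired extractions for one review.
a = {"18.1": True, "18.8": False, "27.2": False}
b = {"18.1": True, "18.8": True, "27.2": False}
print(find_discrepancies(a, b))  # prints ['18.8']
```

Flagging subitems recorded by only one reviewer keeps an incomplete extraction sheet from passing the consensus step unnoticed.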

Results are presented with summary statistics, figures and tables.

Results

The first search (2023) retrieved 5171 articles, and the updated search (2025) retrieved 81. After removing duplicates and screening titles, abstracts, and full texts, we included 38 systematic reviews. The PRISMA flowchart is shown in Figure 1, and the complete list of included reviews is available as supplementary material.

Figure 1. PRISMA flowchart.

Most of the included reviews were conducted in Asia, mainly in Taiwan; among those conducted in Europe, most came from the United Kingdom. Given the topic, all identified reviews were published in 2020 or later, most of them in 2022. Most summary sensitivity estimates ranged from 60.0% to 79.9%, and most summary specificity estimates from 99.0% to 99.9%. The main characteristics of the included reviews are summarized in Table 1, and the full data extraction table with a detailed description of each review is available in the supplementary material.

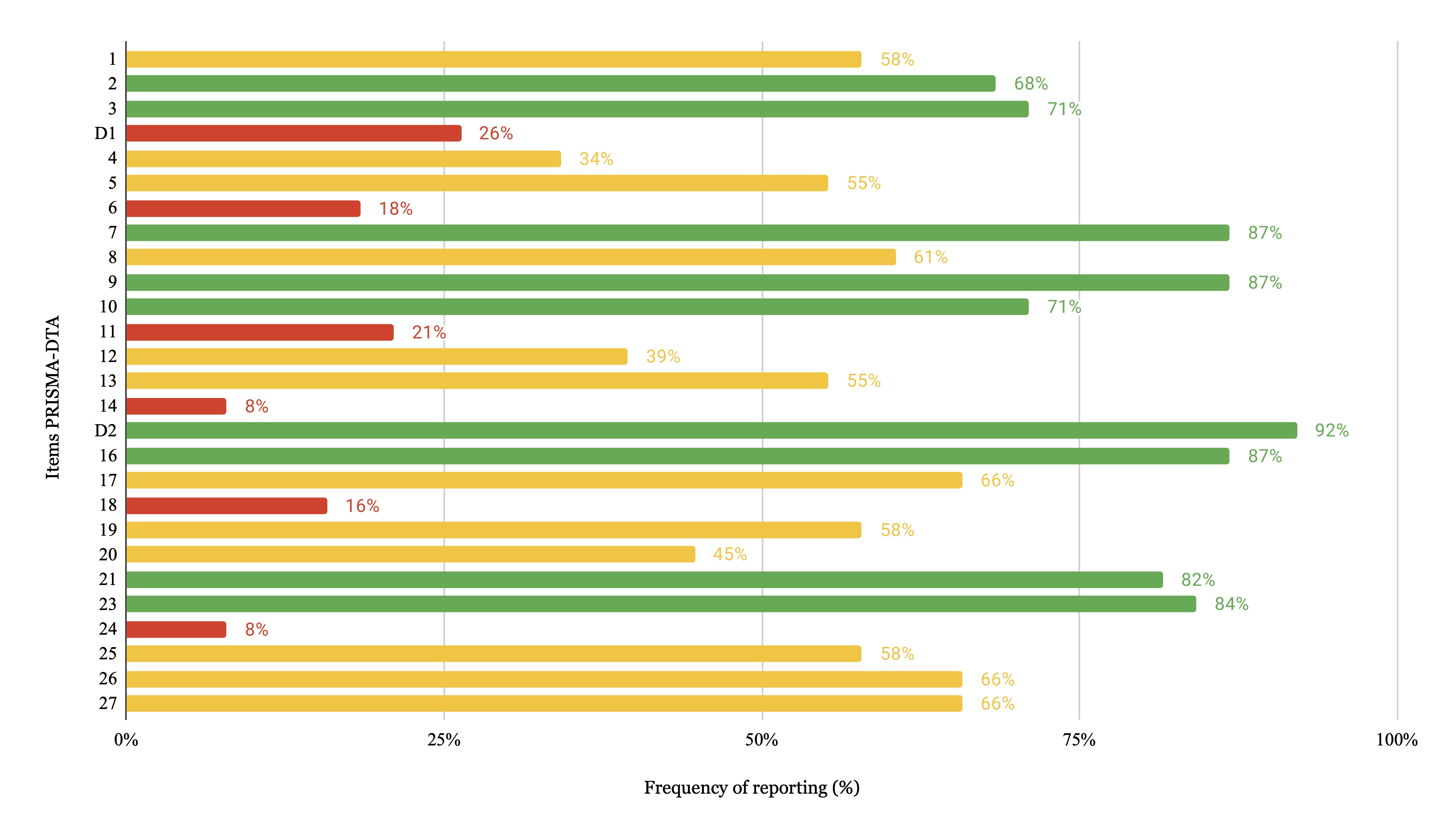

Across all 38 included reviews, no item was infrequently reported (i.e., reported in fewer than 33% of reviews). Figure 2 shows the reporting frequency of each PRISMA-DTA item, and the data extraction table with item frequencies is included in the supplementary material.

Figure 2. Frequency of reporting of each PRISMA-DTA item.

Items related to the title (Item 1) and abstract (Item 2) showed moderate levels of adherence, each being reported in approximately half of the included studies. In contrast, the rationale (Item 3), clinical role of the index test (Item D1), and objectives (Item 4) were frequently reported; the rationale was the most consistently reported.

Within the methods section, protocol and registration (Item 5), search strategy (Item 8), data collection process (Item 10), definitions for data extraction (Item 11), and synthesis of results (Item 14) were moderately reported, with synthesis of results being the least reported. In contrast, information sources (Item 7) were reported in 99% of reviews. Eligibility criteria (Item 6), study selection (Item 9), risk of bias and applicability (Item 12), diagnostic accuracy measures (Item 13), meta-analysis (Item D2), and additional analyses (Item 16) were frequently reported, although their completeness varied.

The results section was among the most consistently reported, with five of its six items frequently addressed. The only exception was study characteristics (Item 18), which was moderately reported, mainly because its subitem on the funding sources of the included studies was rarely reported. Study selection (Item 17), synthesis of results (Item 21), and additional analyses (Item 23) were each reported in over 90% of reviews, followed closely by risk of bias and applicability (Item 19) and results of individual studies (Item 20), which were also frequently reported.

In the discussion section, the summary of evidence (Item 24) was moderately reported. At the subitem level, the main findings were frequently summarized, but aspects such as the strength of evidence were discussed less often, which lowered the overall reporting of this item. Limitations (Item 25) were frequently reported, particularly those related to the included studies; limitations of the review process were discussed less often. Conclusions (Item 26) were reported in most reviews.

Funding (Item 27) was moderately reported. Although most reviews disclosed funding sources, the specific role of the sponsor was not consistently described.

Regarding completeness per review, 23 (60.5%) of the included reviews reported more than 66% of the items, and 15 (39.5%) reported between 33% and 66%; none reported fewer than 33%.
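The per-review proportions follow directly from the counts over the 38 included reviews; the last term shows that the two bands together account for every review, consistent with none reporting fewer than 33% of the items:

```latex
\frac{23}{38} \approx 0.605 \; (60.5\%), \qquad
\frac{15}{38} \approx 0.395 \; (39.5\%), \qquad
23 + 15 = 38
```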

We found that 37 reviews adopted PRISMA as their guiding framework; however, only 29 of the 38 reviews cited the PRISMA statement in their reference list, and the remaining nine did not.

Discussion

Our meta-epidemiological survey reveals persistent shortcomings in the reporting quality of systematic reviews evaluating the accuracy of rapid antigen tests for SARS-CoV-2. Although most reviews reported more than two-thirds of the items, none complied with the full PRISMA-DTA checklist, 15 (39.5%) fell below this threshold (i.e., reported 66% or fewer of the items), and gaps persisted in some key domains.

While several items were adequately reported (including the abstract, rationale, information sources, methods for study selection and data collection, and the methods and results of meta-analyses and additional analyses), reporting adherence was notably lower in a critical domain that requires an in-depth understanding of DTA-specific methodology: the synthesis of results. This domain includes handling multiple definitions of the target condition, pre-specifying thresholds for test positivity, managing discrepant interpretations by different test readers, and appropriately reporting indeterminate results. In addition, several items were frequently omitted: the clinical role of the index test in the introduction; eligibility criteria and definitions for data extraction in the methods; study characteristics in the results; and the summary of evidence in the discussion. These gaps limit the transparency, reproducibility, and decision-making utility of diagnostic reviews, especially during a public health emergency. The observed pattern suggests that general reporting practices are relatively well established but that standards specific to diagnostic test accuracy reviews are still applied inconsistently.

Salameh et al. reported that most systematic reviews of diagnostic accuracy published shortly after the release of the PRISMA-DTA guideline failed to meet basic reporting standards, especially for items related to synthesis, protocol registration, and index test description [22]. Similarly, Willis and Quigley found that, even before PRISMA-DTA, reporting in DTA meta-analyses was weak, with fewer than half of the studies fulfilling core PRISMA items [23]. Our analysis suggests that, although general reporting practices have improved (particularly for items inherited from PRISMA 2009), authors still omit essential DTA-specific methodological details, which is consistent with recent findings [23]. These gaps are not trivial: failing to report synthesis methods, to define diagnostic accuracy measures, or to report risk of bias and applicability assessments limits the interpretability and trustworthiness of a review.

Notably, reporting of meta-analysis methods and of the synthesis of results was generally appropriate. However, this adequacy may be overestimated, because the PRISMA-DTA item on methods only requires authors to “report the statistical methods used for meta-analyses, if performed,” and most included reviews did present pooled sensitivity and specificity. More detailed aspects, such as the specific statistical models employed, approaches to assessing between-study heterogeneity, or exploration of study-level effect modifiers, may therefore have been insufficiently described; however, we did not evaluate these elements, as they fall beyond the scope of the PRISMA-DTA checklist. Only a few reviews meaningfully summarized the evidence and discussed its shortcomings (e.g., variation in test accuracy across brands, settings, or patient populations). Such omissions are especially problematic in diagnostic test reviews, where performance can vary widely depending on clinical and methodological context [24,25].

Our findings align with prior evaluations suggesting that the reporting of study characteristics and the discussion of clinical heterogeneity remain inconsistent in DTA reviews. For example, Naaktgeboren et al. noted inconsistent practices in handling reference standard variation and thresholds across primary studies, and later studies confirmed that heterogeneity is frequently acknowledged but seldom explored quantitatively [26]. Likewise, a recent meta-epidemiological analysis of Cochrane DTA reviews found that heterogeneity is often underreported, undermining the reviews' utility for clinical or public health decision-making [27]. Our findings reinforce that reviews on diagnostic accuracy must do more than report pooled results: they must transparently describe how and why test performance varies across contexts.

A promising response to these challenges is the REPRISE initiative (REProducibility and Replicability In Syntheses of Evidence), which includes developing a reporting guideline that complements existing ones by focusing specifically on the reproducibility and transparency of meta-analytic methods [28]. Rather than offering a checklist for authors, REPRISE will be designed to support the critical appraisal of reviews by emphasizing key elements such as access to analytic code and data, clarity in data preparation and synthesis methods, and justification of analytic decisions. These elements are particularly relevant for meta-analyses of diagnostic test accuracy, where between-study heterogeneity, variable thresholds, and risk of bias can substantially influence results. Our findings, which show poor reporting of synthesis methods, limited description of the included studies, and a near absence of discussion of the nuances of pooled estimates, highlight the need for such structured guidance. By addressing not only what is reported but also how reviews are conducted and whether they can be reproduced, REPRISE represents a necessary step toward more reliable, decision-informing meta-analyses in diagnostic research and beyond.

Our study has some limitations. Our reviewer team comprised medical students with limited experience in methodological studies involving systematic review procedures. Although they received extensive training (weekly sessions, piloting of the extraction sheet, and continuous supervision by senior investigators), some variability may have been introduced into the results. In addition, we operationalized the PRISMA-DTA items into 79 subitems to enhance clarity and reduce ambiguity in assessment. While this approach allowed greater granularity, it also meant that an item was scored as non-compliant if any of its subitems was missing. This scoring may appear stringent, but we believe it aligns with the intent of the checklist and with the principle that all elements within an item are essential for complete and transparent reporting.

Improved editorial enforcement of PRISMA-DTA, coupled with researchers' adoption of REPRISE, may help ensure that future reviews are not only transparent but also robust and actionable. Moreover, training review teams in diagnostic review methodology, promoting protocol registration, and encouraging the integration of risk of bias and heterogeneity analyses into the synthesis are essential steps forward. However, passive dissemination of reporting guidelines is insufficient to ensure meaningful improvement. To reduce research waste and enhance the utility of diagnostic evidence, the field must invest in structured interventions, such as mandatory author checklists, automated editorial checks, and targeted training resources, whose effectiveness should be evaluated through randomized trials or other rigorous implementation research designs.